The Online Safety Act, Nine Months In: What Internet Matters' New Report Says

Internet Matters has published the first substantial read on whether the Online Safety Act is actually doing what it was meant to do. The full report, titled “Are children safer online?”, draws on a survey of 1,270 UK children aged 9 to 16 and their parents, run in the weeks immediately after the Protection of Children Codes came into force on 25 July 2025, along with seven follow-up focus groups held in February. It’s the closest thing we have to a baseline reading of the Act’s effect on families.

The headline conclusion is measured rather than damning. Families are noticing the safety features the Act was supposed to produce. They are also bypassing them, watching their children encounter harmful content at concerning rates, and asking why the things they most worry about (screen time, AI-generated content) are not being addressed at all. For anyone working in schools, the gaps are familiar.

What families are noticing

Roughly seven in ten children (68%) and parents (67%) say they have seen more visible safety features on the platforms their children use: improved reporting tools, content filters, and restrictions on functions like live streaming or chat. 64% of parents have noticed new or improved parental controls. About half of children (53%) say they have recently been asked to verify their age, most commonly when setting up new accounts.

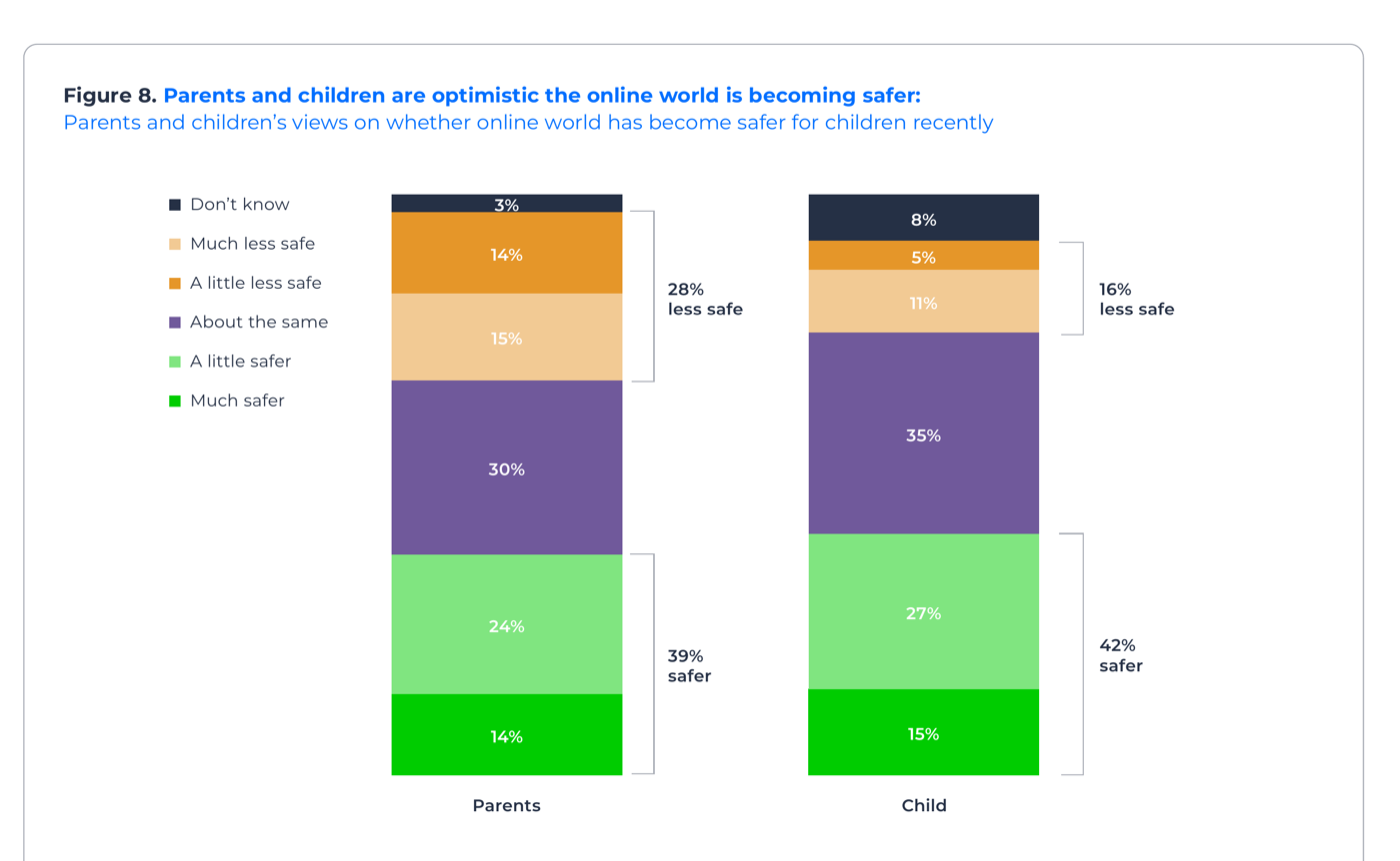

Where children have noticed changes, they are broadly in favour of them. 90% support the improved blocking and reporting flows, and three quarters welcome restrictions on contacting strangers or accessing risky features like live chat. 39% of parents and 42% of children think the online world has become safer recently, although 28% of parents and 16% of children think the opposite.

This is genuine progress, and it’s worth saying so. The Act has produced visible changes on the surfaces children actually use, and they can tell. Reporting tools are a particular bright spot, given Internet Matters’ earlier work showing that children often gave up on reporting because the process was too complex and they didn’t trust platforms to act.

Where it isn’t landing

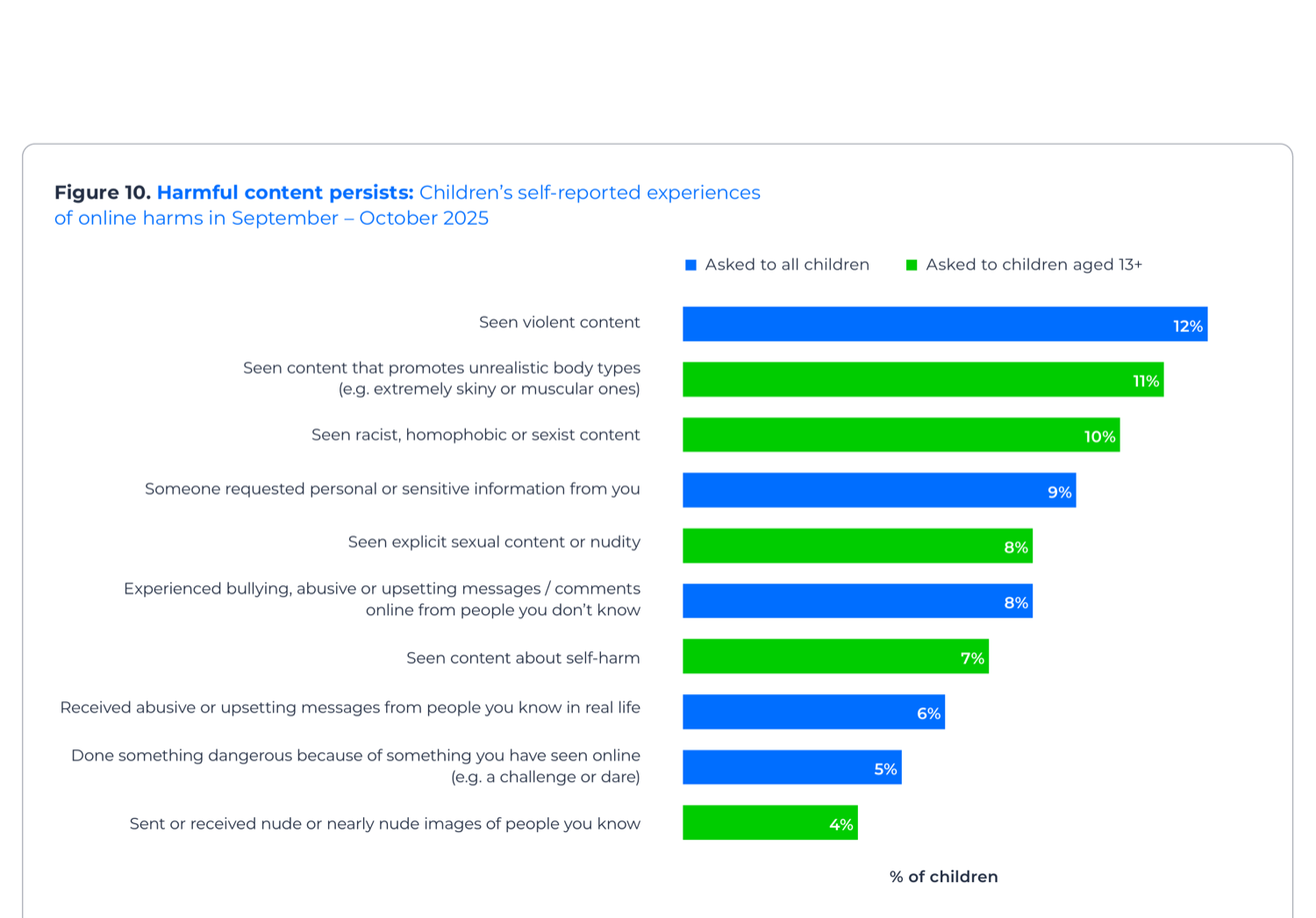

The same report is honest about what hasn’t shifted. 49% of children said they had experienced harm online in the past month: 12% had seen violent content, 11% content promoting unrealistic body types, 10% racist, homophobic or sexist content, and 8% explicit sexual content, while 9% had been asked for personal information. All of these are categories the Protection of Children Codes are meant to protect children from. The focus groups include a 14-year-old describing seeing the Charlie Kirk assassination video on Snapchat and breaking down in tears.

Age verification, which is doing a lot of the structural work in the Act, is the area families are most sceptical about. 46% of children think age checks are easy to bypass; only 17% find them difficult. A third (32%) have personally bypassed one, mostly through a fake birthdate (13%) or by using someone else’s login (9%). Focus group participants described drawing on facial hair to fool facial age estimation, or submitting video of someone else (and, in one case, a cartoon character) to age estimation tools.

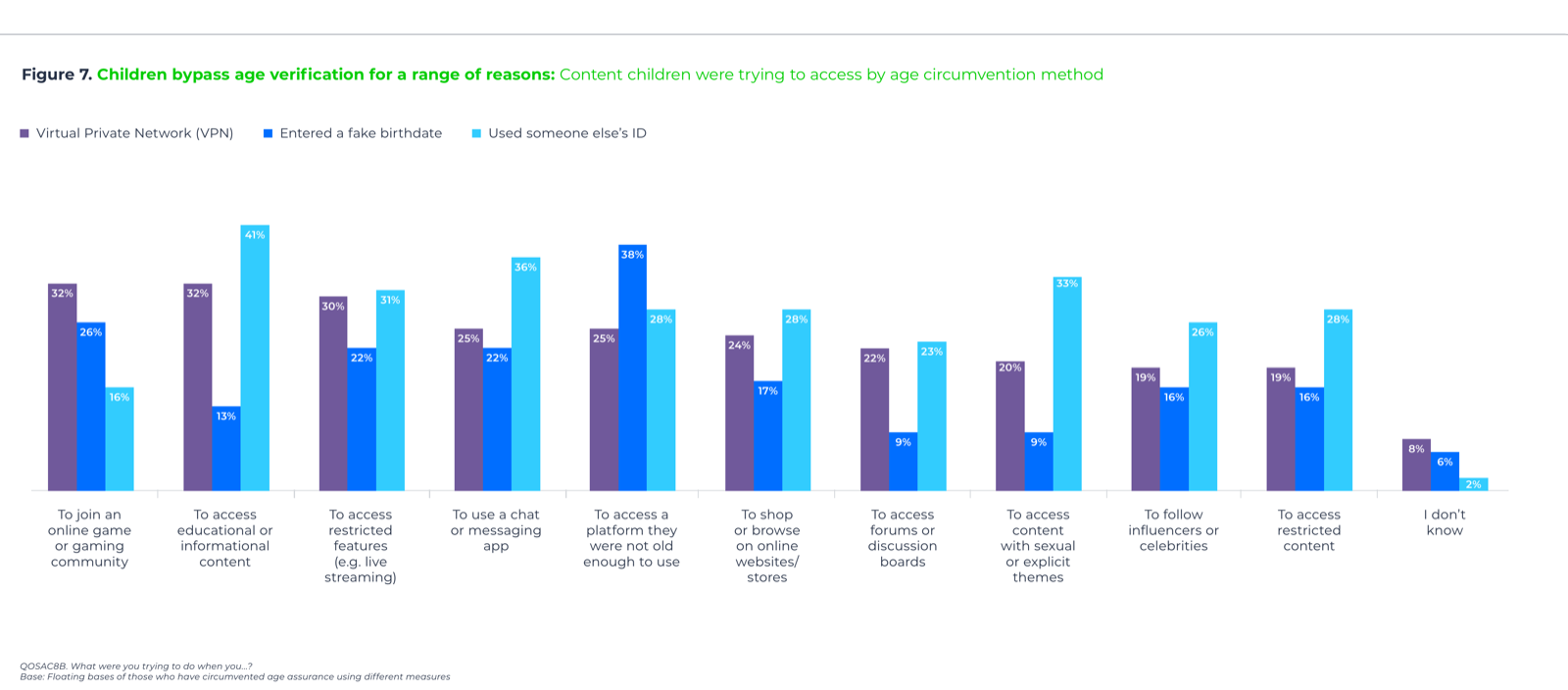

What children were trying to access is worth pausing on. The most common reason for bypassing an age check was to reach educational or informational content (32% via VPN, 41% via someone else’s ID). The blunt instrument of an age gate is, in many cases, just blocking children from the open web. The next largest categories are gaming, restricted features like live streaming, and messaging apps. Sexual content is in there, but it isn’t the largest bucket. Schools talking to parents about this need to be specific about which risk they are trying to mitigate, because the same workaround is being used for very different reasons.

The part that should give schools pause: 26% of parents have allowed their child to bypass an age check, with 17% actively helping. The legislation assumes parents are the last line of defence. A quarter of them are the workaround.

The two gaps the Act doesn’t really touch

Two things keep coming up in the focus group quotes, and neither is well addressed by the current regulatory framework.

The first is time spent online. Parents and children both describe this as their most immediate, day-to-day concern. The report quotes a 12-year-old saying her screen time is eight hours a day and a 16-year-old saying she’s on her phone at 3am on school nights. The Act has nothing meaningful to say about persuasive design, infinite scroll, autoplay or streak mechanics. These are commercial choices, and the Act largely leaves them alone.

The second is AI-generated content. The Act predates the current generation of generative AI and, as Internet Matters notes, doesn’t map neatly onto it. 63% of children in earlier Internet Matters research said they were worried about AI-generated content and fake news. 27% have seen a fake or AI-generated news story and believed it. Separate Internet Matters research on nude deepfakes found that 13% of children have encountered one, and 55% are more worried about a fake nude image of themselves being shared than a real one. I’ve written before about how quickly accountability for AI-related harm slides onto the school when something goes wrong. The Act, as it stands, isn’t going to slow that down.

What this means for schools

A few things stand out from a school perspective.

The first is that schools are explicitly in the recommendations. Internet Matters argues that media literacy should be delivered “at scale” through the curriculum, with proper guidance, training and resources for teachers. That’s a familiar ask and a familiar resourcing problem. If government takes the recommendation seriously, expect new statutory or near-statutory requirements on schools to teach this material properly, much as we’ve just seen with phone policies becoming law. Plan for that, rather than reacting to it.

The second is that the safeguarding picture is shifting, not improving. Half of children encountering harm in a given month is the baseline now, not the failure case. The Act may reduce some of that over time, but the report is clear that the bigger drivers, persuasive design and AI, remain largely unregulated. Safeguarding leads should expect to be dealing with deepfake incidents, AI-generated bullying material and algorithmic content exposure as a routine workload, not as edge cases.

The third is enforcement. As of March 2026, Ofcom has issued 16 fines totalling nearly £4 million. That’s not negligible, but it’s also not a deterrent at platform scale. Only 22% of parents and 31% of children believe the government is doing enough. The political pressure for sharper enforcement is going to keep building, and the report explicitly calls for it.

Where I land

This isn’t a report that says the Online Safety Act has failed. It says, more carefully, that the Act has produced visible changes families can see, that those changes are broadly welcomed, and that the most urgent concerns of parents and children sit outside what the Act was designed to do. Both halves of that picture are true.

For schools, the most useful part of the full report is the section on shared responsibility. Parents do not think they can keep children safe online on their own, and they’re right. The realistic picture is one where government, platforms, parents and schools each carry a portion of the load. Schools are, by some distance, the institution best placed to teach the media literacy piece, and the report says so plainly. Whether the curriculum and the funding follow is the next question.